Tracking my Cat with Frigate NVR

Previously, I built a home security camera system using ZoneMinder. Somewhat dissatisfied with the status quo of ZoneMinder, I set out to try Frigate, a brand new security NVR, and see whether an NVR written specifically to integrate with Home Assistant could be used for more than just recording and viewing camera footage.

The Old System

I am using the same Dahua cameras I installed in the ZoneMinder system. In fact, I ran ZoneMinder and Frigate side by side for about a month while I validated the new system, both using the same ZFS pool! Read that project page for information on the cameras I chose and why. There may be newer cameras available now, but I’m still satisfied with the image quality of the ones I already have.

Since writing the article on the old system, I added a few cameras to ZoneMinder purely for viewing in the app: the USB security camera connected to my 3D printer’s OctoPrint setup, and the PoE camera I got to monitor my 3D printer (covered in its own project page).

Setting Up the VM

I set up a new Ubuntu VM on my Minilab to run Frigate. Without an AI accelerator, and with only 4 vCPU cores so it wouldn’t starve Home Assistant, I could comfortably run AI inference on two cameras, but not on three. My goal was to run AI on the close-up door cameras at a high enough resolution to detect the cat, which rules out the low-res D1 (704x480) sub stream on my 1440p cameras. That leaves two options: lower the main stream’s resolution to something I can afford to run detection on, or run detection on the full 1440p main stream. Either way, detection has to process far more pixels than the sub stream provides, hence the massive CPU penalty. I was fine using the D1 stream on the far-shot cameras, where I care more about car and person detection, since cars and people are significantly larger than cats. With this in mind, I ordered two Coral AI accelerators to fit in the Minilab’s two M.2 slots.
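To put rough numbers on that penalty, here is a small sketch comparing frame sizes. It assumes the 1440p main stream is 2560x1440 (the usual 4MP resolution) and D1 is 704x480, and it uses a hypothetical cat spanning 120 pixels across the main stream to show how much detail survives the drop to the sub stream.

```python
# Rough frame-size comparison for the two stream options discussed above.
# Assumption: "1440p" means 2560x1440 (typical 4MP main stream); D1 is 704x480.
MAIN_1440P = (2560, 1440)
SUB_D1 = (704, 480)


def pixels(res: tuple[int, int]) -> int:
    """Number of pixels in one frame of the given (width, height)."""
    width, height = res
    return width * height


def scaled_width(object_px: float, src: tuple[int, int], dst: tuple[int, int]) -> float:
    """Horizontal span of an object after the frame is rescaled from src to dst.

    Only the width is scaled, because D1's aspect ratio differs from 16:9.
    """
    return object_px * dst[0] / src[0]


ratio = pixels(MAIN_1440P) / pixels(SUB_D1)
print(f"1440p frame: {pixels(MAIN_1440P):,} px")
print(f"D1 frame:    {pixels(SUB_D1):,} px")
print(f"1440p / D1:  {ratio:.1f}x more pixels per frame")

# Hypothetical example: a cat spanning 120 px across in the 1440p stream.
print(f"same cat in D1: {scaled_width(120, MAIN_1440P, SUB_D1):.1f} px wide")
```

Under these assumptions a 1440p frame carries roughly 11 times the pixels of a D1 frame, and the hypothetical 120 px cat would span only about 33 px in the sub stream.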

As you can read in the Minilab project, I really struggled with PCIe passthrough on Xen, and even a bit after moving to Proxmox, but it worked out in the end, and I found myself with a brand new Ubuntu VM with two working Coral AI accelerators. I still gave it 4 vCPU cores and 4 GB of RAM, which is hopefully enough for the full camera system. I set up Docker following the Docker install guide for Ubuntu, and was off to the races.
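After a passthrough fight like that, a quick sanity check inside the VM is to confirm both Corals show up as device nodes before handing them to Docker. This is a minimal sketch, assuming the Coral PCIe/M.2 driver is installed in the guest, which exposes each accelerator as `/dev/apex_N`; the function name is hypothetical.

```python
from pathlib import Path


def find_coral_pcie_devices(dev_dir: str = "/dev") -> list[str]:
    """Return the Edge TPU device nodes (apex_0, apex_1, ...) found in dev_dir.

    Assumes the Coral PCIe/M.2 driver is loaded in the guest, which is what
    creates one /dev/apex_N node per accelerator.
    """
    return sorted(path.name for path in Path(dev_dir).glob("apex_*"))


if __name__ == "__main__":
    EXPECTED = 2  # two M.2 Corals passed through to this VM
    devices = find_coral_pcie_devices()
    print(f"found {len(devices)} Coral device(s): {devices}")
    if len(devices) < EXPECTED:
        print("passthrough or driver problem: not every Coral is visible")
```

Taking the directory as a parameter keeps the check easy to point at a different path, for example when testing it outside the VM.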

Setting up Frigate

At this point, I have the 5 Dahua cameras I set up for the ZoneMinder system, the OctoPrint Raspberry Pi and its USB webcam, and the wide-angle PoE camera watching the 3D printer. I set up my Ubuntu VM to load the Frigate directory from a dedicated ZFS dataset on the file server, which holds the recordings, clips, and the config.yml file. I moved the database file off the remote ZFS dataset and onto the VM’s 30GB OS drive because of file locking issues with Samba shares. I wrote a config file I was happy with, assuming the Coral AI was all-powerful (and I had two of them), using the 5 Dahua cameras and the wide-angle PoE camera. I quickly learned a lot:

- Frigate does not let you create a camera without a detect behavior at all. It really wants to run object detection, and I don’t want that for my 3D printer cameras.

- I expected a bit too much from the little Coral AI chip, even with two of them. When I tried to run 15fps analysis on four cameras at 1440p and one at 1080p (the 4K camera, which supports higher-resolution sub streams), it was not happy.

- Decoding so many high-resolution, high-frame-rate streams with ffmpeg is quite CPU-intensive without a GPU to offload it to, and I’m not comfortable passing the iGPU through, since that would give up my only way to access the Proxmox console physically.

After some struggle, and having learned a lot, I settled on a much more reasonable configuration:

- The two door cameras will run analysis at 5fps on the 1440p main stream. Only the main stream is used for these cameras. They have person and cat detection enabled.

- The two wide-field 1440p cameras will run analysis at 5fps on the D1 sub stream, using the D1 stream only for detection and the main stream for everything else. These cameras have car and person detection enabled.

- The 4K varifocal camera will run analysis at 5fps on a 720p sub stream, using the 4K stream for recording and clips and the 720p stream for real-time viewing and detection. This camera has car, person, and cat detection enabled; I doubt the resolution is high enough to pick out cats, but I want to give it a try.

- The 3D printer cameras were added to Home Assistant directly, and skip Frigate entirely. HA manages the (re)streaming of these cameras to the HA app and web UI.
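As a back-of-the-envelope check on why this layout is so much lighter than the first attempt, the sketch below totals the pixels per second handed to detection in each plan. The frame sizes (2560x1440, 1920x1080, 1280x720, 704x480) are assumed standard values for the named streams, and pixel rate is only a rough proxy for how much video the VM has to chew through, not a measurement of Coral or CPU load.

```python
# Assumed frame sizes (width, height) for the streams named in the text.
RES = {
    "1440p": (2560, 1440),
    "1080p": (1920, 1080),
    "720p": (1280, 720),
    "D1": (704, 480),
}


def pixel_rate(plan: list[tuple[str, int]]) -> int:
    """Total pixels per second handed to detection for (stream, fps) entries."""
    total = 0
    for stream, fps in plan:
        width, height = RES[stream]
        total += width * height * fps
    return total


# First attempt: four 1440p cameras plus the 4K camera's 1080p sub stream, all at 15 fps.
first_attempt = [("1440p", 15)] * 4 + [("1080p", 15)]

# Final layout: door cameras on the 1440p main stream, wide cameras on D1,
# the 4K varifocal on its 720p sub stream, everything at 5 fps.
final_layout = [("1440p", 5)] * 2 + [("D1", 5)] * 2 + [("720p", 5)]

first_rate = pixel_rate(first_attempt)
final_rate = pixel_rate(final_layout)
print(f"first attempt: {first_rate:,} px/s")
print(f"final layout:  {final_rate:,} px/s")
print(f"reduction:     {first_rate / final_rate:.1f}x")
```

Under these assumptions the final layout feeds detection about 5.6 times fewer pixels per second than the first attempt; dropping from 15 to 5 fps accounts for a factor of 3 on its own, and moving the wide cameras and the 4K camera to smaller sub streams covers the rest.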

Cat Detection

As promised, I really wanted to track my cat. Frigate delivered. Here are some gems for you guys to enjoy:

| Front Door | Back Door |
|---|---|
| Staring into your soul | Off on an adventure |
| A skilled jumper | Supervising the humans |
| Leaving for fun | Relaxing in the shade |

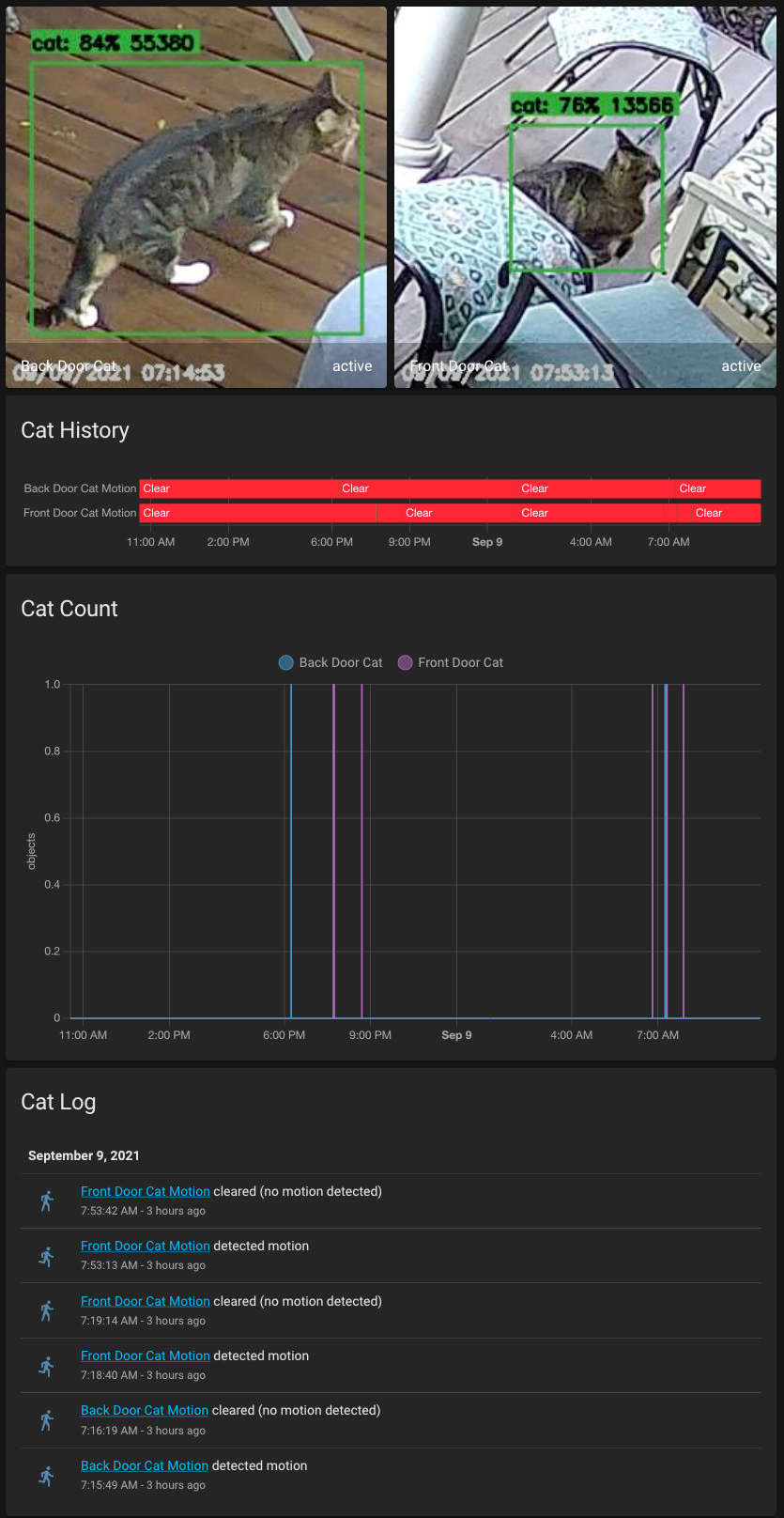

Lovelace View

I added a tab to my HA Lovelace security page specifically for watching the cat.

Final Thoughts

Frigate is an excellent NVR and has earned its place in my network. Once I am satisfied with the recording and clip searching, I will deprecate ZoneMinder and switch to using Frigate exclusively for security cameras. I’m already impressed with how reliably Frigate rejects things like bugs, spiders, and swaying tree shadows, and I feel I can trust its filtered events more than ZoneMinder’s. I already have plans to automate exterior lighting based on person detection, so we don’t have to ‘leave the light on’ for people coming home late, and Frigate should reduce false positives in this regard. More to come on this subject for sure.
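The exterior-lighting idea is still a plan, but the core decision is small enough to sketch. In practice it would most likely live in a Home Assistant automation; the Python below only illustrates the logic. It assumes Frigate’s MQTT event messages (on the `frigate/events` topic) are JSON with a `type` field and an `after` object holding `camera` and `label`; the camera names and the dusk/dawn window are hypothetical.

```python
import json
from datetime import time

# Hypothetical camera names; use whatever names the Frigate config defines.
LIGHT_CAMERAS = {"front_door", "back_door"}


def is_dark(now: time, dusk: time = time(19, 0), dawn: time = time(6, 30)) -> bool:
    """True between dusk and dawn; the window wraps past midnight."""
    return now >= dusk or now < dawn


def should_turn_on_light(payload: str, now: time) -> bool:
    """Decide whether one Frigate event message should switch on an exterior light.

    Assumes the payload is JSON with a "type" field and an "after" object
    carrying "camera" and "label", as in Frigate's MQTT event messages.
    """
    event = json.loads(payload)
    after = event.get("after") or {}
    return (
        event.get("type") == "new"          # only react when a tracked object first appears
        and after.get("label") == "person"  # ignore cars, cats, etc.
        and after.get("camera") in LIGHT_CAMERAS
        and is_dark(now)
    )


if __name__ == "__main__":
    sample = json.dumps({"type": "new", "after": {"camera": "front_door", "label": "person"}})
    print(should_turn_on_light(sample, time(22, 15)))  # prints True
```

Filtering on the `person` label is where Frigate earns its keep here: a bug or a swaying shadow never becomes a `person` event, so it never reaches this check.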